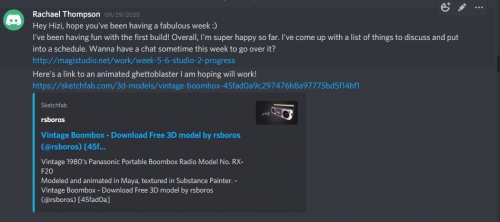

I've been having weekly catch-ups with Hizi over Discord (with messages in between), and we've been looking at the Unity project and discussing and making changes as we go.

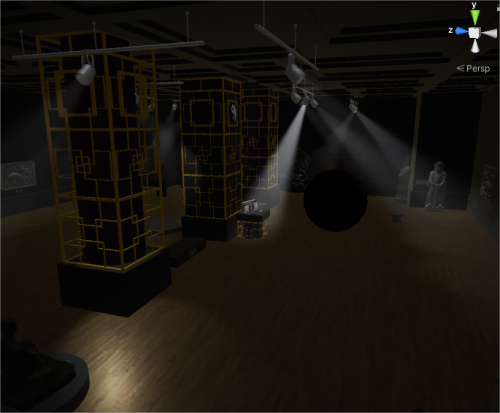

Hizi and I have discussed at length the pros and cons of using the camera feed and overlaying/placing the 'museum' objects in the user's real environment vs placing them in a whole immersive AR scene.

At this stage, we have decided to go with the whole immersive AR scene because we feel this will make for a more immersive experience, as the AR objects and the AR environment will match. However, in future I would like to test new technology that would allow the lines between the real world and the augmented one to become less clear. For example, how far can we push the integration of AR and the real world without it looking gimmicky or fake?

In addition to this, further progress has been made in the following areas:

I've made a list of things to fix or change, and Hizi has put these into a spreadsheet. We then discussed the order of priority. You can see this in the screen capture of the spreadsheet.

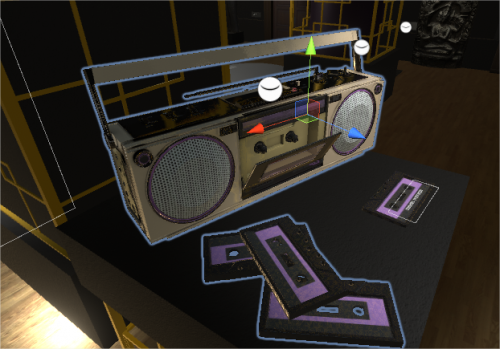

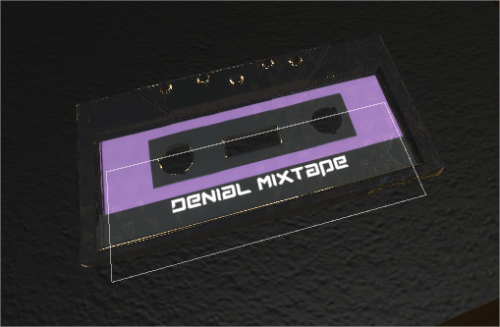

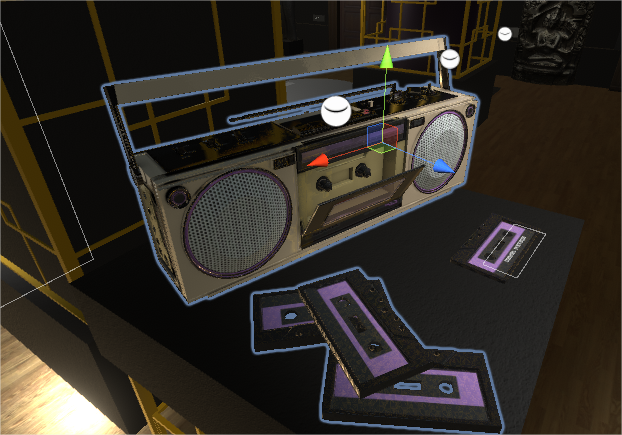

I've managed to get a new ghettoblaster asset by contacting a student artist on Sketchfab. Hizi has managed to animate it!

I've been working on the narration: I've tightened up the script and hired Zulya (a performer) to do the voiceover and, if time allows, video as well. I think it would be good to see her at the start of the experience before she becomes a disembodied voice.

Zulya and I have had discussions about the character: who she is, what she wants, how mad she may have gone, etc.

Zulya has sent me several versions of voices/characters and I've picked one to go with (the second character on the attached audio file in the Downloads list).

Next we will hopefully record some video.

I'll work on SFX for the AR scene.

Downloads:

- Download File: narration-test-3-.mp3

About This Work

By Rachael Thompson

Published On: 13/11/2020

tags:

AR, ACI Studio 2, XR, mobile