Theme

Play and Sound

Context (Inspiration)

In 'Introduction to Critical Play' (chapter 1 of Mary Flanagan's 'Critical Play'), critical play is described as play that can 'function as means for creative expression, as instruments for conceptual thinking, or as tools to help examine or work through social issues', in addition to its usual role of entertaining its users (Flanagan 2009).

Combining this with the 'Playable Room' idea I had last week, I wanted to see whether I could develop an interactive artwork that tackles people's lack of movement during lockdown.

Sounds in Space, an experimental project by Google Creative Lab, lets its designer place sounds in real-life locations. When the user (the audience) wears the specially designed kit and walks around the area, the phone picks up the signal and turns it into what the user hears in that part of the space. The user only has to walk around; they don't need to tap the phone to activate or trigger anything, which keeps their interaction with the technology to a minimum. This project gave me the idea of placing sound in real-life locations using AR.

Symons's Aura gave me the idea of interactive animation between the sound objects placed in the environment. Instead of playing a 'collision of sound' when users interact, I would like the sound objects to interact visually when they are placed close enough together. This is an idea for future work, though: for the prototype, I aim to tackle the main mechanism of placing sound in real-life locations.

Method

When building this week's interactive artwork, I tried a 'code it for the computer first, then port it to AR' workflow. It failed badly, mainly because I didn't take into account how laggy plane detection with AR Foundation and ARCore is on my phone. However, coding the computer version first helped me understand a few C# concepts better before recreating the same effect in AR. For example, I was able to work out the code for the pitch and volume of the sound more quickly because I already knew how to change them in C#. Calling a function in one script from another script was another problem I learnt to solve while coding the computer version.

Keeping the same basic idea, I switched from a wall-based instrument to a ground-based interactive choir, simply because vertical plane detection doesn't work as well as horizontal plane detection on my phone. I also realised that the ground-based choir actually fits better with my aim of tackling the lack of movement during lockdown.

While coding, I also came to understand the difference between an array and a list better. The online tutorials I found for spawning 3D objects on a detected AR plane consistently used a list to store the game objects, which allows an unlimited number of them to be added. Because I wanted to cap the number of game objects (choir members) the user can add to the scene, to keep the interactive artwork optimised, I used an array instead. This let me set a limit on the number of spawned choir members and reuse a slot in the array when a new choir member is added after one has been deleted.
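The fixed-capacity idea can be sketched as follows. This is a minimal, language-neutral sketch in Python rather than the project's actual Unity C# code. A list pre-filled with `None` stands in for a C# array whose length never changes. The class name `ChoirSlots`, its methods, and the string placeholders for choir members are all hypothetical.

```python
from typing import List, Optional


class ChoirSlots:
    """Hypothetical fixed-capacity store for choir members.

    The list is created once with a fixed length (like a C# array),
    so the cap on choir members is enforced by construction.
    """

    def __init__(self, capacity: int) -> None:
        self.slots: List[Optional[str]] = [None] * capacity

    def add(self, member: str) -> Optional[int]:
        """Put a member in the first free slot; return its index, or None if full."""
        for i, occupant in enumerate(self.slots):
            if occupant is None:
                self.slots[i] = member
                return i
        return None  # cap reached: refuse to spawn another member

    def remove(self, index: int) -> None:
        """Free a slot so that a later add() can reuse it."""
        self.slots[index] = None

    def count(self) -> int:
        """Number of occupied slots."""
        return sum(1 for s in self.slots if s is not None)


choir = ChoirSlots(capacity=3)
print(choir.add("alto"), choir.add("tenor"), choir.add("bass"))  # 0 1 2
print(choir.add("soprano"))  # None: the choir is full
choir.remove(1)
print(choir.add("soprano"))  # 1: the freed slot is reused
```

A capped list would work too. The array-style version simply makes the limit and the slot reuse explicit.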

For the pitch of the sound, I initially used 'audioSource.pitch' in C#. Although it shifts the pitch, it also changes the length of the sound, because it works by changing the playback speed (and therefore the frequency) of the original recording. In the end, I recorded the 8 notes of an octave and used the distance between the camera and the tap location to decide which note plays: the further the tap location is from the camera, the higher the pitch. To get the pitch they want, users therefore have to move around their room and find the right distance. Ideally, this encourages them to move more and eases the lack of movement.
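The distance-to-note mapping might look like this. This is a language-neutral Python sketch, not the project's C# code. The note names, the 0.5 m to 4 m distance range, and the linear split into 8 equal bands are assumptions chosen for illustration. The second helper shows why speed-based pitch shifting changes a clip's length: raising the pitch by n semitones multiplies playback speed by 2^(n/12), so the clip gets shorter by the same factor.

```python
NOTE_NAMES = ["C4", "D4", "E4", "F4", "G4", "A4", "B4", "C5"]  # assumed: one recorded clip per note
MIN_DISTANCE = 0.5  # metres; assumed nearest useful tap distance
MAX_DISTANCE = 4.0  # metres; assumed furthest tap distance in a typical room


def note_for_distance(distance: float) -> int:
    """Map camera-to-tap distance onto one of the 8 recorded notes.

    Further taps give higher notes; distances outside the range are clamped.
    """
    t = (distance - MIN_DISTANCE) / (MAX_DISTANCE - MIN_DISTANCE)
    t = min(max(t, 0.0), 1.0)
    # Split [0, 1] into 8 equal bands; t == 1.0 would give 8, so clamp to the last index.
    return min(int(t * len(NOTE_NAMES)), len(NOTE_NAMES) - 1)


def resampled_duration(seconds: float, semitones: float) -> float:
    """Clip length after speed-based pitch shifting (the approach that was dropped)."""
    return seconds / (2 ** (semitones / 12))


for d in (0.3, 1.0, 2.25, 4.0):
    print(d, NOTE_NAMES[note_for_distance(d)])  # C4, D4, G4, C5
print(resampled_duration(2.0, 12))  # 1.0: one octave up plays twice as fast
```

Using separately recorded notes avoids the speed change entirely, at the cost of storing 8 clips.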

Each choir member gets louder as the camera moves closer to it. This is my attempt to recreate the feeling of being at a real-life choir performance. Unfortunately, I couldn't recreate a stereo effect with only my phone's speaker: the space is 3D, and the speaker can only do so much.
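A distance-based volume rule could be sketched as follows. This is a Python sketch, not the project's code. It assumes a simple linear falloff between hypothetical `near` and `far` distances, with positions given as (x, y, z) tuples in metres. In Unity, an AudioSource also has built-in distance rolloff settings (`minDistance`, `maxDistance`, `rolloffMode`), which may be worth comparing against a hand-written rule like this.

```python
import math


def volume_for_distance(distance: float, near: float = 0.5, far: float = 5.0) -> float:
    """Linear falloff: full volume at `near` metres or closer, silent at `far` or beyond.

    `near` and `far` are assumed values, not taken from the original project.
    """
    if distance <= near:
        return 1.0
    if distance >= far:
        return 0.0
    return 1.0 - (distance - near) / (far - near)


camera = (0.0, 1.5, 0.0)  # hypothetical camera position
member = (0.0, 1.5, 2.75)  # hypothetical choir member position
print(volume_for_distance(math.dist(camera, member)))
```

A linear rule is easy to tune by ear. A logarithmic curve would sound closer to how loudness drops off in a real room.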

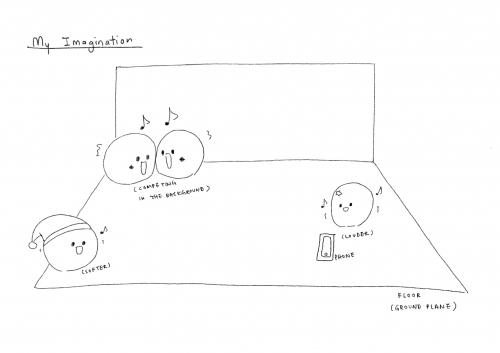

That's it for the prototype. However, I want to add interactive animation to this artwork in the future. For example, when two choir members are placed near each other, they could interact, either by pushing each other around to compete for the user's attention, or by singing and dancing together harmoniously. For now, users can place them right next to each other and nothing happens. Each choir member's rotation could also follow the camera's position so that the choir members always face the camera. The image above illustrates what I have in mind.
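The face-the-camera idea can be sketched as a yaw calculation. This is a Python sketch of the underlying maths, not Unity code. In Unity, `Transform.LookAt` or `Quaternion.LookRotation` could handle this directly. The sketch assumes a y-up frame like Unity's, where a model faces +z at zero rotation and positive yaw turns it from +z towards +x. The height difference is ignored so the members stay upright; the function name is hypothetical.

```python
import math


def yaw_towards(member_pos, camera_pos):
    """Yaw in degrees that turns a member (facing +z at 0 degrees) towards the camera.

    Positions are (x, y, z) tuples; only x and z are used, so the
    member rotates around the vertical axis and stays upright.
    """
    dx = camera_pos[0] - member_pos[0]
    dz = camera_pos[2] - member_pos[2]
    return math.degrees(math.atan2(dx, dz))


print(round(yaw_towards((0, 0, 0), (0, 1.6, 2)), 6))  # 0.0: camera straight ahead
print(round(yaw_towards((0, 0, 0), (3, 1.6, 0)), 6))  # 90.0: camera to the right
print(round(yaw_towards((1, 0, 1), (0, 1.6, 1)), 6))  # -90.0: camera to the left
```

Recomputing this every frame would keep each choir member turned towards the user as they walk around.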

Response

This week's response is an AR interactive choir artwork. I focused on the interactive sound and user movement rather than on animation. I do plan to develop interactive animation for this project in the future. However, the animation is not fundamental, and leaving it out does not change the core of the interactive artwork.

The video shows an example of how to play with the interactive artwork. An APK file is attached to this post in a zip folder, so you can download it and give it a go! Have fun directing a choir!

Reflection

The lack of movement people experience during lockdown is the issue I want to tackle in this week's response; that is my approach to critical play. Although it is not strictly a social issue, it affects almost everyone in lockdown. Feeling cooped up can eventually lead to low or depressed moods, which in turn reduce our desire to move around and may affect some people's health.

'According to Lefebvre, it is through everyday habits, and through the body, that people experience the urban space.' (Flanagan 2009). As going out and exploring the city is not an option for now, the only space people can experience is their own room. I want to create an interactive artwork that lets users re-engage with their room and reclaim it, using their body movement as input and sound as output. I hope that, by playing with this artwork, people will be encouraged to walk around their room and listen to the music they create by tapping, which might help ease the lack of movement.

Although the artwork requires the user to explore the space, it is a location-free design, so there are few, if any, requirements for enjoying it. As long as there is a plane the phone can detect, users can play with the artwork wherever they want and regain the joy of moving around. They can also rebuild their connection with their room, which becomes not just a room but a playground for figuring out the best placement for the choir members.

However, I noticed one problem when reading the 'Artists' Locative Games' chapter in 'Critical Play'. When games are designed for urban spaces, people can be drawn into them involuntarily and end up enjoying taking part. Even though such games can also affect the lives of people who have no desire to play, those people at least have the option of joining in. As part of critical play is about addressing social issues, exposing a game to people who may or may not be aware of the issue increases the chance of addressing it. Designing this location-free artwork for a room essentially rules out anyone else taking part. Moreover, people need to want to download and install the artwork before they can play with it. I wonder whether there is a way to fix this exposure problem. Maybe I should design something for Zoom or Teams meetings so that I can share the concept behind each design with more people.

References

Flanagan, M. (2009). Critical Play: Radical Game Design. The MIT Press.

Downloads:


Download File: play-and-sound-apk-file.zip

About This Work

By Yee Hui Wong


Published On: 22/09/2020